Late last year I began experimenting with "Vibe Coding" using Claude and MCP (Model Context Protocol) tools. Since then, I've explored this new frontier of human-computer collaboration, where the most critical skill hasn't been mastering syntax or memorizing APIs, but communicating clearly and logically in an ongoing dialog with an LLM.

A Bicycle for the Mind

Amid the chaos of a world in crisis, I’ve found hope in an unexpected place: coding. With tools like Claude.ai and MCP, I’ve been building a web app to help food pantries serve their communities better—automating inventory, breaking language barriers, and streamlining processes. This isn’t just about code; it’s about turning anxiety into action, using technology to create something meaningful. If you’ve ever wondered how AI can amplify human effort, this is a story for you.

Video Lecture: 3D Modeling Basics for Beginners – Techniques, AR Tips, and Intro to AI Tools

I have some exciting news! On October 23rd, 2024, I was once again invited to guest lecture at the CMU School of Design, and I decided to follow up with a recorded version to share. In this recording, made after the original lecture session, I cover the essentials of 3D modeling with a focus on beginner-friendly techniques. You'll find practical insights into mesh modeling, workflow tips for Blender, and an introduction to preparing models for augmented reality. The full lecture video is embedded below, followed by detailed notes that offer a step-by-step breakdown of theory and techniques for anyone new to 3D design. Dive in, explore, and start building your own 3D modeling skills.

Principles of Mesh Modeling

Note on Mesh Modeling Focus—Or Why This Lecture Focused Primarily on Mesh Modeling:

Meshes are the standard 3D model type used in real-time 3D engines, such as Unity and Unreal, and in virtually every AAA video game of the last 30 years, going all the way back to id Software's Quake in 1996.

Key Principles:

Use Quad Faces Whenever Possible: Build your faces from quads rather than triangles (tris) or n-gons.

Reason: Quads are infinitely divisible, since each subdivision splits one quad into four smaller quads, making it easier to adjust geometry resolution as needed. Tris and n-gons are not as flexible, which can lead to undesirable artifacts and poor topology.
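To make "infinitely divisible" concrete: under Catmull-Clark subdivision (the default scheme of Blender's Subdivision Surface modifier), an n-sided face becomes n quads on the first level, and every quad becomes four quads on each level after that. The sketch below is only an illustration of that counting rule, not Blender code; the function name is hypothetical.

```python
def subdivided_face_count(face_sides: list[int], levels: int) -> int:
    """Count faces after `levels` rounds of Catmull-Clark subdivision.

    face_sides lists how many sides each base face has,
    e.g. [4, 4, 4, 4, 4, 4] for a default cube.
    """
    if levels < 0:
        raise ValueError("levels must be non-negative")
    if levels == 0:
        return len(face_sides)
    # Level 1: every n-sided face (tri, quad, or n-gon) becomes n quads.
    count = sum(face_sides)
    # Each further level: every quad becomes 4 quads.
    return count * 4 ** (levels - 1)


cube = [4] * 6
for level in range(4):
    print(level, subdivided_face_count(cube, level))
```

Three levels already turn a 6-face cube into 384 faces, which is why it pays to start low and add resolution later. Tris and n-gons also become quads after one level, but they leave behind irregular vertices (poles) where pinching and shading artifacts tend to appear.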

3D games primarily use triangles (tris) instead of quads because triangles are the simplest polygon shape and are always planar (a flat surface). This makes them computationally faster to render in real time, which was crucial for the underpowered hardware of early gaming systems. Essentially, triangles require less processing power to calculate and display on screen than quads, which have more vertices and edges.
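A small sketch of the geometry behind this: any three points define a plane, but a quad's fourth vertex can drift off that plane. The check below uses the scalar triple product, which is zero when four points are coplanar, and the split shows how one added diagonal turns a quad into two triangles. The helper names are illustrative assumptions, not Blender's implementation (Blender's Triangulate modifier, for example, lets you choose how the diagonal is picked).

```python
Vec3 = tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def is_planar(p0: Vec3, p1: Vec3, p2: Vec3, p3: Vec3, tol: float = 1e-9) -> bool:
    """Four points are coplanar when the scalar triple product is (near) zero."""
    return abs(dot(sub(p1, p0), cross(sub(p2, p0), sub(p3, p0)))) <= tol


def quad_to_tris(quad: tuple[int, int, int, int]) -> list[tuple[int, int, int]]:
    """Split quad (a, b, c, d) along the a-c diagonal into two triangles."""
    a, b, c, d = quad
    return [(a, b, c), (a, c, d)]


flat = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0)]
bent = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0.5)]  # one corner lifted
print(is_planar(*flat), is_planar(*bent))
print(quad_to_tris((0, 1, 2, 3)))
```

The flat square passes the check and the bent one does not; each of the bent quad's two triangles is still perfectly flat, which is exactly why renderers favor triangles.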

On modern computer hardware we can get away with more complex geometry, and it's generally a better trade-off to build mesh models from quads. That is, the computational costs are vastly outweighed by the benefits of evenly divisible face geometry and more manageable topology. Lastly, quads are easily converted into tris by adding a diagonal edge between two opposite vertices.

Work from the Lowest Possible Polygon Count: Always start with the lowest polygon count (i.e., resolution) for your model. You can increase resolution later with subdivision modifiers, but it's much harder to reduce it later.

Reason: Editing a high-resolution mesh is more difficult than working with a low-resolution one, which offers greater control and flexibility. High-resolution meshes also demand far more processing power and memory, which slows Blender down and increases the risk of crashes.

Keep Base Shapes Simple: Keep your base shapes as simple as possible. When adding details, create those elements as separate objects. When you hit a milestone, consider duplicating a model or a collection of models into a new instance for further refinement.

Reason: This approach makes 3D modeling more manageable, allowing for easier adjustments and cleaner geometry.

Use Modifiers and Non-Destructive Editing Whenever Practical: Designing a symmetrical shape? Cut it in half and use a Mirror Modifier to cut your editing time in half. Keep in mind that even the most complex designs can ultimately be derived from very basic shapes: Spheres, Cones, Toruses, and Cubes.
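Conceptually, a Mirror Modifier keeps only the half you edit and regenerates the other half on the fly. The sketch below models just the vertex positions, reflecting them across the X = 0 plane and skipping vertices that sit on the seam so they are not duplicated, which is roughly the idea behind the modifier's merge option. The function name and the 0.001 threshold are assumptions for illustration; the real modifier also mirrors faces, UVs, and more.

```python
Vertex = tuple[float, float, float]


def mirror_x(half: list[Vertex], merge_threshold: float = 0.001) -> list[Vertex]:
    """Return the half mesh plus its reflection across the X = 0 plane.

    Vertices within merge_threshold of the plane are kept once,
    so the two halves share a welded seam instead of overlapping.
    """
    full = list(half)
    for x, y, z in half:
        if abs(x) > merge_threshold:  # seam vertices would just duplicate themselves
            full.append((-x, y, z))
    return full


half_square = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0), (0.0, 1.0, 0.0)]
print(mirror_x(half_square))
```

Because the mirrored half is derived rather than stored, every edit you make to the original half shows up on both sides, which is where the time savings come from.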

Work From Reference Images, Even If Just A Few Basic Sketches: Press Shift + A to open the Add menu. Navigate to Image > Reference. Select the image file you want to use from your computer. The reference image will be added to your 3D Viewport, where you can position, scale, and rotate it as needed for your modeling task.

Build The Overall Form First, and Then Separate into Smaller Objects: This will ensure that your designs are cohesive and edges are properly aligned. When you're ready to divide into separate objects, duplicate the objects into a new Collection.

Experiment, Tinker, Explore, and Start Over: You're unlikely to get the design right on the first attempt. It's often necessary to work through the problem, and then start over from scratch once you've had enough time to explore the form. Reason: Your second draft will almost certainly be better than the first.

Blender Quality of Life Recommendations:

Save Your Project Files Early and Often: Use Blender's "Save Incremental" command (⌥ + ⌘ + S, or Option + Command + S) as a simple form of version control. Doing this will give you the freedom to fearlessly tinker and explore (as mentioned in the previous point) before settling on a final design.

Crank Up The Number of Undo Steps: Open Edit from the top menu and select Preferences to open the Blender Preferences window. In the Preferences window, click on the System tab, then scroll down to find the Undo Steps setting (under Memory & Limits).

Increase the value (the default is 32). If you have enough system memory, set it to 256, the maximum, for more flexibility in undoing actions. Close the Preferences window to save your changes.

Consider Using A Material Library: Blender has a basic built-in material library, but it's not very useful. Look into larger libraries, such as the PBR Material Asset Library + OneClick Add-on for Blender (https://shardsofred.gumroad.com/l/CfOnY). Materials released under the Creative Commons Zero (CC0) public domain dedication can be used for basically anything, and will save you time.

Remember to Perform a UV Unwrap on Your Model Geometry for Best Results When Texturing: The most realistic textures in the world won't help you if your model doesn't have good UV mapping. Remember the chocolate Santa Claus example? Proper unwrapping is essential for creating realism with your models. https://docs.blender.org/manual/en/latest/modeling/meshes/uv/applying_image.html

Recommended Extensions and Add-ons:

VDM Brush Baker: Helps you create and bake Vector Displacement Maps directly in Blender.

Bool Tool: Boolean operations for complex shape creation.

Node Wrangler: Adds shortcuts and tools that streamline node editing.

Rigify: Automated rigging solution for character animation.

Loop Tools: Useful for organic modeling (with some bugs appearing in Blender 4.2, so be sure to keep this add-on updated!).

Other Useful Add-ons: Auto Mirror, F2, Extra Mesh/Curve Objects, Extra Grease Pencil Tools, Copy Attributes Menu, and MeasureIt.

Bonus: Need furniture? Most of IKEA's product catalog has 3D models available. Search for "IKEA" under Extensions, and you can easily search for and import these 3D models into your scenes.

Note: Ensure 'Allow Online Access' is enabled in Blender's Preferences (under System) so add-ons and extensions can update.

Create Augmented Reality Experiences for iOS with Xcode Developer Tools, Reality Composer, and USDZ File Format

Once you've finalized your form, added necessary details, and applied your materials, you should be ready to export your model.

Step-by-Step Instructions for Preparing 3D Assets for Export to USDZ:

Duplicate Your 3D Assets and Collections: Create a new instance of your 3D assets specifically for export.

Apply All Transforms: Hit A to select all visible objects, then press ⌘ + A (Command + A) and select All Transforms to apply.

Apply All Modifiers: Apply all modifiers in the same order they were added to each model, except for subdivision, since tessellation data can (usually) be included in the export without applying it directly to the models.

Join All Components: Hit A to select all visible objects, then press ⌘ + J (Command + J) to perform a join operation.

Export the File: Go to File > Export > Universal Scene Description (.usd*).

Configure Export Settings:

Include: Check Visible Only and Selected Only.

Blender Data: Select Custom Data.

Namespace: Use the default setting (UserProperties).

Blender Names: Enable this option.

File References: Set to Relative Path.

Convert Orientation:

Z = Forward Axis

Y = Up Axis

Note: Blender uses a Z-up coordinate system, while many other 3D tools, including Apple's Xcode tools, expect Y-up. If you don't apply this conversion, your model will appear improperly rotated after import. If this happens to you, double-check these settings.

Use Settings for Render: Enable this option.

Object Types: Select Mesh, Volumes, Curves.

Geometry: Enable UV Maps, Rename UV Maps, Normals.

Subdivision: Set to Best Match.

Rigging: Enable Armatures (if you have rigged and animated your model).

Materials: Select USD Preview Surface Network and Export Textures.

USDZ Texture Downsampling: Set to 1024px or up to 2048px (the largest size acceptable for iOS QuickLook).

Update File Extension: Change the export file name extension from .usdc to .usdz.
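Two of the settings above boil down to simple arithmetic, sketched here in plain Python as an illustration rather than the exporter's actual code. The orientation conversion amounts to a -90° rotation about the X axis: Blender's up axis (+Z) becomes +Y, and Blender's front (-Y) becomes +Z, matching "Z = Forward Axis, Y = Up Axis." Texture downsampling is modeled as scaling an image so its longest side fits the chosen limit; the helper names, and the assumption that aspect ratio is preserved, are mine.

```python
Vec3 = tuple[float, float, float]


def blender_to_y_up(v: Vec3) -> Vec3:
    """Rotate -90 degrees about X: (x, y, z) -> (x, z, -y).

    Blender's +Z (up) maps to +Y, and Blender's -Y (front) maps to +Z.
    """
    x, y, z = v
    return (x, z, -y)


def downsampled_size(width: int, height: int, max_px: int = 1024) -> tuple[int, int]:
    """Scale (width, height) so the longest side is at most max_px.

    Assumption for illustration: aspect ratio is preserved and
    textures already within the limit are left untouched.
    """
    longest = max(width, height)
    if longest <= max_px:
        return (width, height)
    scale = max_px / longest
    return (max(1, round(width * scale)), max(1, round(height * scale)))


print(blender_to_y_up((0, 0, 1)))             # Blender "up"
print(blender_to_y_up((0, -1, 0)))            # Blender "front"
print(downsampled_size(4096, 2048, max_px=1024))
```

If a model shows up lying on its back or facing sideways after import, it is usually because this rotation was applied twice or not at all.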

If no issues are encountered after export, you should be able to view your model in augmented reality on any iOS device. Open the exported file from iCloud, or send it to another device by email, text message, or AirDrop to view it.

Setting Up Xcode and Reality Composer:

The latest version of Xcode doesn't include Reality Composer, as Apple has shifted its focus to the Vision Pro. You can still access the augmented reality tools for iOS devices with a few additional steps.

Step-by-Step Instructions:

Download the Latest Version of Xcode 14: Download it from https://developer.apple.com/download/all/

NOTE: You'll need a free Apple Developer account to access the link above, or to use this direct link: https://download.developer.apple.com/Developer_Tools/Xcode_14.3.1/Xcode_14.3.1.xip

Extract and Rename The Older Version of Xcode: Rename Xcode.app to Xcode14.app and place it in your Applications folder.

Open Terminal on Your Mac.

Open the Applications Folder in Finder.

Drag the Xcode14 App into Terminal: This will automatically add its path.

Add to the Path: Next to the path, add: /Contents/MacOS/Xcode.

Full Command Example: The command will look like:

/Applications/Xcode14.app/Contents/MacOS/Xcode

Run the Command: Press Enter to run the command.

You should now have access to Reality Composer in Xcode. Click on the Xcode menu in the menu bar, then click Open Developer Tool, and then click on Reality Composer.

Learn more about using Reality Composer here: https://developer.apple.com/documentation/realitykit/realitykit-reality-composer

Learn more about Apple's RealityKit and ARKit here: https://developer.apple.com/augmented-reality/tools/

BONUS: Generative AI and 3D

Tripo AI (https://www.tripo3d.ai/app) is an advanced generative AI tool that allows for both text-to-3D and image-to-3D model generation. This tool offers users an intuitive way to create complex 3D assets with minimal manual input, simply by describing what they need or providing a reference image.

Key features:

Text-to-3D and Image-to-3D Conversion: Users can input a detailed description or upload an image, and within seconds, the AI generates a draft model ready for refinement.

Prompt: "A pineapple-hedgehog with spiky fruit armor and leafy quills."

https://tripo3d.ai/preview?share=9a57357e-6262-469c-afb1-c7af74d92c93

Prompt: "A 1980s sci-fi robot stylized as a Nintendo NES product."

https://tripo3d.ai/preview?share=a08a55cd-9e66-48a5-be3d-85a26160e461

High-Speed Generation: Tripo’s AI processes are optimized for efficiency, allowing users to generate detailed models in a matter of seconds, ideal for prototyping or quick visualizations.

Customization Tools: After generating a model, users can adjust topology for increased details, or apply stylization, such as voxels.

Seamless Integration: Tripo3D supports a variety of export formats, such as .usdz, .obj, and .fbx, making it easy to import models into Blender and other software for further editing.

Full PBR Texture Maps: Generates physically based rendering (PBR) textures, adding even greater detail beyond the geometry.

Automatic Rigging and Basic Animations: Applies a basic animation rig to generated models, along with simple animations such as a running character.

Downsides:

Imprecise generation: just like AI image generators, results are unpredictable and often wrong.

Costs: Using this tool requires a membership plan with a limited number of monthly credits, which limits usage.

CREDITS:

Thanks to all of these wonderful educators and content creators who continue to inform and inspire me throughout my 3D journey. Preparing this lecture required lots of time and consideration for how to condense what I’ve learned over the last five years into something I could demonstrate in under 2 hours. This wasn’t easy, but I had many fantastic resources to pull from.

If I’ve left anyone out, please leave a comment so I can include them here:

Ashley Deal and Raelynn O'Leary — CMU School of Design Faculty and Founding Partners at Dezudio http://www.dezudio.com

Phil Eichmiller — Principal Software Engineer at Autodesk: https://blogs.autodesk.com/community-journal/2022/04/26/meet-phil-eichmiller-principal-software-engineer/

YouTube Creators:

Reference Files:

Robot model created with Tripo AI

Robot model with corrected orientation

Note: Due to a bug, the robot walking animation doesn’t play back in QuickLook AR for iOS.

Week 15: Final Project Update

This will be my final update for the Studio II project. I feel a complex blend of emotions as I write this. I am relieved to be done. I am also sad to know that my time with this team has come to an end. I consider myself incredibly lucky to have spent so much time working with some truly amazing designers. I don’t know if I will ever experience anything like this again, but I hope so.

Remote collaboration has few perks, and I was lucky to be working with folks who helped to make this experience so much fun.

The work we have done this week feels different for many reasons. We had to prepare something for a large and diverse audience, not all of whom were familiar with the context of our work. We also needed to use this time to tie up remaining loose ends, reaching an end state where our process could feel somewhat conclusive.

Our efforts were just as collaborative as ever, as we divided up the labor of our remaining tasks. It was incredibly reassuring to know my team members’ strengths and capabilities, and to know who was working on each task. For my part, I was busy scrubbing through a timeline in After Effects, rapidly assembling visual representations and edited footage to make a convincing newscast from the future. Considering the constraints of remote collaboration, I’m very pleased with the final product.

I have continued to ruminate over this notion that the future is something we cannot predict, but rather something we build through imperfect knowledge. I question the power our team has to influence this process, not because I lack confidence in our shared abilities (as I’ve said before and often, I’ve been working with an amazing team), but out of concern for the consequences of inspiration. Our process was far from perfect. The vagaries of a pandemic distorted every effort. The educators we sought to connect with were terribly busy. Our own team suffered from fatigue and sleeplessness as we juggled future careers and other academic expectations. The complexity of this topic is well beyond the scope of fifteen weeks of diligent inquiry.

I cannot speak for the entire team, but I know that for me personally our exploratory research was the most intimidating phase. It was immediately clear that we were engaging with a very difficult problem. Education intersects with so many other areas of study. It is a problem of policy, culture, funding, and methodology, and it is weighed down by a history of systemic inequality and racism. Generative research methods were the biggest surprise. I was astonished by what could be gleaned through a participatory process. Including educators in the generation of concepts was exciting, and I wish we had more time to engage in this work.

Our final concepts are a reflection of many perspectives and early prototypes generated by K-12 educators

Every phase also felt too short. We needed to move on before we could fully digest what we were learning. Nevertheless, I stand behind the work we have done, because I know it represents the best we had to offer. I knew that design is a messy process long before my time at CMU, but I now have a much clearer sense of what it means to engage with that mess and to assemble something coherent. This work is not easy, and it is never, ever truly complete. The deadlines for a design project function like the layers of silt in a fossil record. Each stratum represents a progression with no clear ending or beginning. We can always dig deeper.

I hope these artifacts will inspire others as they have inspired us.

Our team has assembled a project homepage. There you will find more comprehensive information about this work, the final outcome and documentation. Check it out!

Week 13: Artifact Generation

We’ve begun to generate assets for our final artifacts. This should be an exciting time for us. For the last 13 weeks, we’ve been living and breathing the problem space. The future of Portland Public Schools is not a matter of fate; it is something that will be built, not only designed but also transformed by external forces and deliberate interventions. This work and our team’s research are only one tiny piece of this larger unfolding process, and we cannot know what impact (if any) will come from what we have done.

On some level, I cannot help but feel a little bit sad as we conclude this work. I have a very real sense of the scope of this issue and understand that fifteen weeks cannot generate anything conclusive. Nevertheless, we must honor this process and the deliverable. There is an underlying contradiction in this work. What this project calls for is “bold humility.” We know that our research is not conclusive, but we also know that without bold presentation, we cannot inspire meaningful change or advance the greater vision of Prospect Studio.

Our primary concept is a news story about PPS holding their first ARC summit, and what it means for the future of Portland schools and teachers. We can use this medium to communicate the most salient details while glossing over the more bureaucratic aspects of our system-level thinking. For secondary artifacts, we’re thinking about “swag” that is typical for a professional conference, as well as a custom logo for the ARC council.

I’m feeling a lot of pressure to resolve these artifacts to the highest fidelity possible. I know that the success of this project rests somewhat on our ability to persuade others, and we cannot know how this work will be interpreted if the artifacts are not convincing or feel too generic. I’m also worried that we have spent so much time working on the particulars that we haven’t given ourselves room for making these things.

I wish that we had a better sense of what is expected, and how craft will be factored into our grade. This is the first time that I’ve taken a studio class where nothing was made until the last two weeks. I expect that our team will be evaluated on the strength of our research and the clarity of our concepts, but as a studio class, I cannot shake this feeling that we should have been crafting prototypes along the way.

My hope for this week is that the momentum of making and the joy of purely creative pursuits will have a feedback effect to keep us motivated through this final push. I’m excited about the potential for the project even though we are still grappling with an incredibly high degree of uncertainty.

Week 12 Update: Evaluative Research Presentation and Reflection on Reaching The Project's Final Stage

This week our team presented our evaluative research to Prospect Studio (Fiona and other representatives were asynchronous for this session) and our guest, Arnold Wasserman. This presentation is the last before our final deliverable, and represents the conclusion of our research phase. While there are some loose ends for us to address (and further evaluation of our concept has not yet been attempted), we are now in the early stages of artifact synthesis.

The last few weeks have helped our team understand the importance of user evaluation, and which strategies do and do not work well in a remote/online context. In particular, we learned that building a survey is a miniature design project unto itself. Creating an interactive system and evaluating the results required significant labor up front and a lot of uncertainty throughout. Nevertheless, I feel that our team was successful in achieving specific goals.

I’m proud to say that we managed to get several different concepts in front of several educators from around the country as well as from within PPS specifically. We successfully navigated and sorted through feedback to gauge overall patterns of responses to several concepts as well as system-level evaluations. We managed to coordinate and divide our labor effectively, and communicated asynchronously as we brought key components together. This process was mirrored in the creation of our latest slide deck for Wednesday.

We received helpful feedback and challenges to our concept following our team’s presentation. As has been the case before, our team had a good sense of who ought to respond to specific questions, since our divided labor has granted each team member some degree of specialization and familiarity with the topics we’ve been researching. Specifically, Arnold Wasserman was curious about how our artifacts could communicate these concepts in a compelling and persuasive manner. He pointed out that school boards and the people elected to them have a tendency to be self-serving, to the detriment of the districts they represent. He questioned how our concepts would overcome the significant obstacle of implementation, especially given that school boards and public officials hold the levers of power and teachers are functionally an underclass in the United States.

This is something I’ve been thinking about since the beginning of this project, and I drew on these thoughts in my response. My ideas are largely based on the work of Donella Meadows and her famous essay on leverage points.

PLACES TO INTERVENE IN A SYSTEM

(in increasing order of effectiveness)

12. Constants, parameters, numbers (such as subsidies, taxes, standards).

11. The sizes of buffers and other stabilizing stocks, relative to their flows.

10. The structure of material stocks and flows (such as transport networks, population age structures).

9. The lengths of delays, relative to the rate of system change.

8. The strength of negative feedback loops, relative to the impacts they are trying to correct against.

7. The gain around driving positive feedback loops.

6. The structure of information flows (who does and does not have access to information).

5. The rules of the system (such as incentives, punishments, constraints).

4. The power to add, change, evolve, or self-organize system structure.

3. The goals of the system.

2. The mindset or paradigm out of which the system — its goals, structure, rules, delays, parameters — arises.

1. The power to transcend paradigms.

In particular, look at points three and four: the power to self-organize and the goals of the system are key to understanding the forces necessary to reform PPS to more closely resemble the vision from Prospect Studio. I agree with Arnold Wasserman’s observation regarding school boards and policy makers, but I also see a real opportunity with this difficult and problematic group. They hold the levers, so we need only find a way to align their goals with the reforms we envision for PPS.

If we accept the premise that politicians and school board members care about their own tenure and individual interests, and do so above all other considerations, then what we need to produce are artifacts that provoke the parents and registered voters of that school district. Once an activated and inspired public knows what it desires, it will vote for and ultimately elect representatives who promise to bring that vision to life. We have seen this on matters ranging from civil rights and infrastructure to economics and war. Politicians will follow public pressure to keep their own seats warm.

Arnold seemed pleased with my answer, and suggested that our topic relates directly to the fate of our nation’s democracy — so, no pressure at all!

This weekend our team held three meetings to jumpstart this process of future artifact synthesis, and they have been more or less fruitful. It’s exciting to be in the final stretch, but our team has been struggling to maintain momentum lately. The demands of presentation weeks and the rush to complete our research often require long hours, multiple Zoom meetings outside of class, and many late nights. This has begun to produce negative health consequences for our team.

We’ve been intensely looking at teacher burnout, but have also been confronted with the burnout of a pandemic, and the rigorous academics of a graduate program. Illness, headaches, and signs of exhaustion have crept into our team dynamic, and I’m concerned about what this will mean now that we are heading into the final push for this semester. What we really need at this stage is that spark of creativity and divergent thinking. It’s hard to do this level of work while also pushing up against the steady hum of stress and exhaustion.

Brainstorming session, mapping events and trends to eventual implementation of key ARC concepts

I think it was a gigantic error on the part of CMU to break up our spring break. I understand the rationale and the concerns around travel, but this alternative strategy of giving students a random Monday or Tuesday off has not provided the benefits of time off to rest. I simply cannot “sleep faster” when given a 24-hour window, and I cannot catch up when one day of classes is omitted from an otherwise packed calendar. I’m burned out. I’ve got this strange ringing in my ear that won’t let up, and I’m having more trouble concentrating than at any other time this year.

Languishing in the fog of constant deadlines, constant tasks, constant meetings, constant emails, Slack messages, updates, etc., etc., has left me depleted. It has also sucked the joy out of doing this work. I hope this terrible mental and physical state doesn’t last, because I don’t see how I can be productive while feeling this way.

Week 11: qualitative evaluation of concepts

Our online survey is now underway, and while this virtual format isn’t exactly like so-called “speed dating,” we are hoping that it will serve a similar purpose for our research. Creating a meaningful online experience for our participants was a tall order, especially with such tight constraints. There are many risks when creating a fully automated, hands-off system. Not being there to clarify or address questions or concerns in real time was something we needed to accept as a trade-off. In exchange, we have a dozen unique participants ranging from 2 to 27 years of experience, from various districts around the country.

So far, the majority of responses have been from an online community of English teachers, so our data is skewed toward this perspective. On the plus side, English teachers provide excellent written responses. To avoid the pitfalls of statistics and quantitative analysis, we designed an online survey with open text fields, and we framed our questions around hypothetical scenarios. This would provide us with reflection and insights into how teachers imagine these concepts for themselves, and what perceived deficiencies come up for them in thinking about these systems in action.

Screenshot of survey responses, exported into a CSV file

The last 24 hours in particular have been very exciting, as we finally gained access to online educator communities. This has been slower than we wanted, but we first needed to fully develop our survey before we could deploy it. That step, in and of itself, was a design challenge.

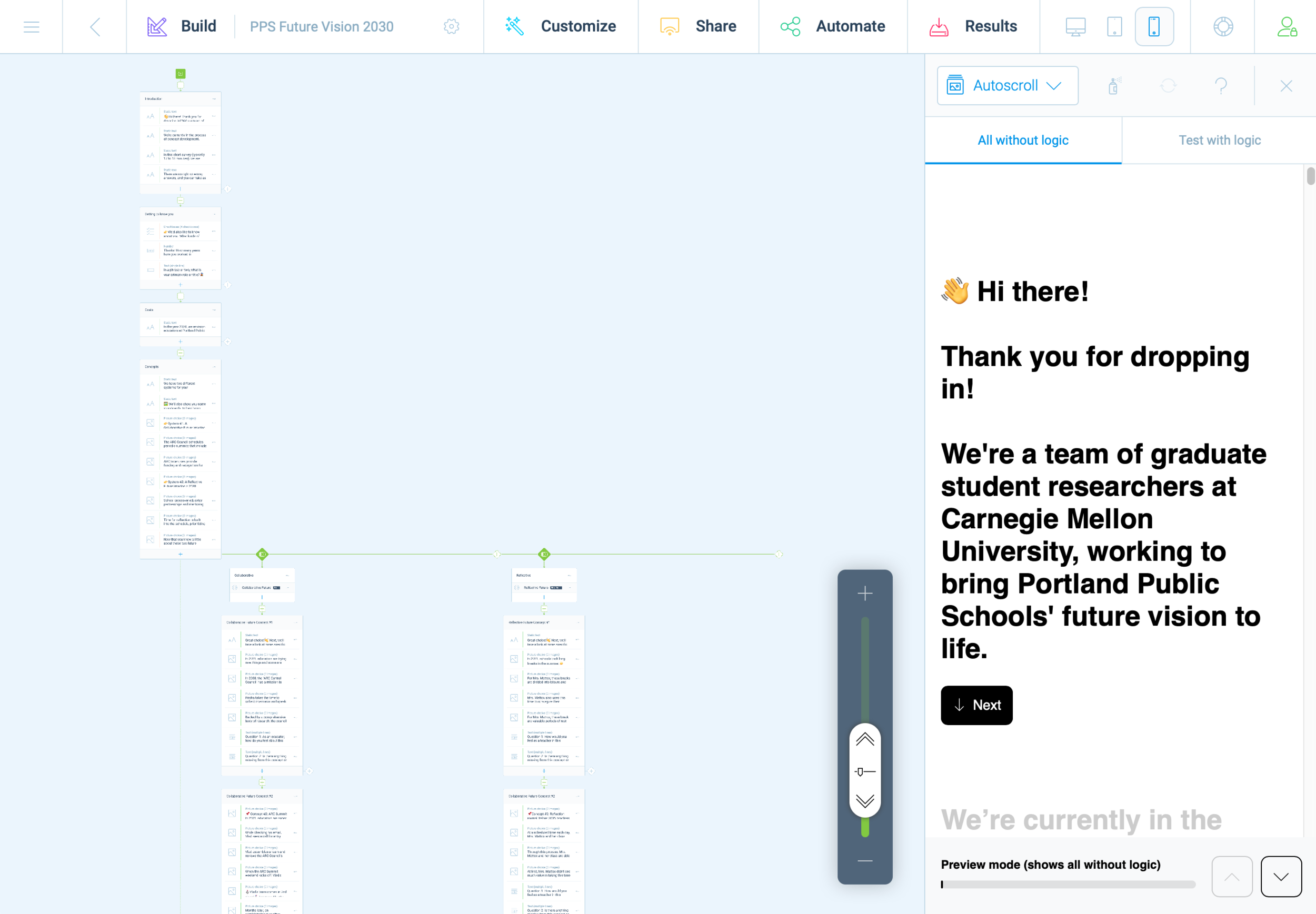

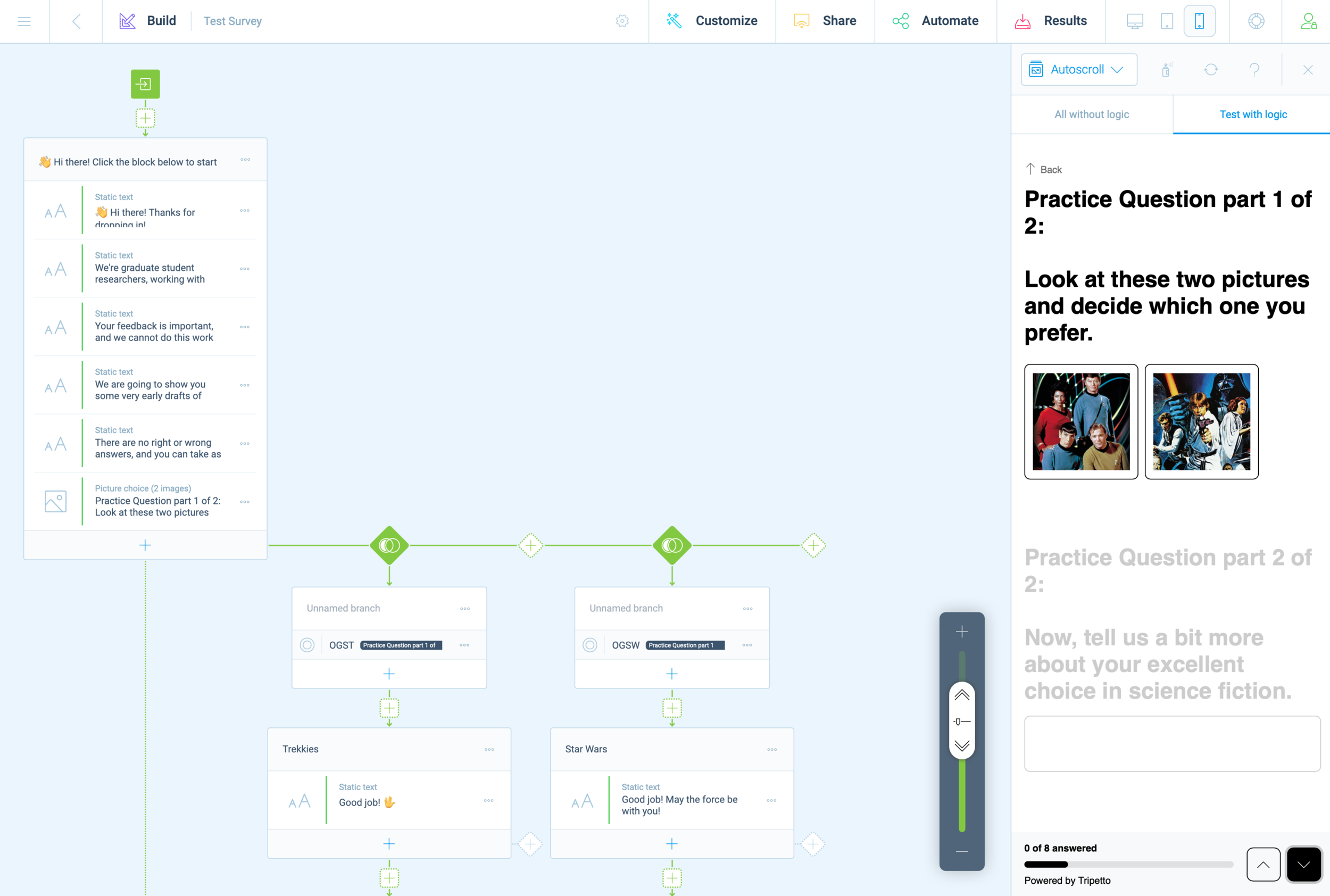

Last weekend, we decided to use the Tripetto platform. This gave us the same logic capabilities as Typeform, but without any additional costs. It became clear almost immediately that we would need to prototype and refine our survey before receiving teacher feedback, and this effort was highly collaborative.

With multiple teammates, it was possible to divide this task into several areas that could be worked on independently and in parallel. We first decided on a basic structure and strategized the division of labor. Carol worked on the text/content based on a logic diagram we crafted together. While Carol crafted this outline, I created a mockup version in Tripetto. Without access to finalized concept sketches, I took some poetic license.

Screenshot of 2nd iteration prototype survey

As Carol and I worked together to refine the text copy, Cat and Chris worked together to create images and descriptive text for our participants. Once all of this content was ready for Tripetto, we began doing test runs, trying to break the experience. This revealed some quirks with Tripetto’s logic functions and some of its less apparent features.

There are a few honorable mentions: Tripetto has a lot of subtle features that we often take for granted in other online experiences, such as placeholder text, required fields, multiple-choice radio buttons, checkboxes, and multi- and single-line text boxes. During the refinement phase, these features became essential, and it was exciting to discover them, though only once they had become essential enough to be worth the effort.

The minimalist UI of Tripetto made these features less evident, but not too hard to locate or execute. From start to finish, this experience felt a little shaky and uncertain but viable.

I often found myself this week grinding away on the platform, slipping into a state of mind that Mihaly Csikszentmihalyi describes as “flow.” In other words, Tripetto wasn’t easy to use, but it was just challenging enough to keep me interested in working through obstacles. I think what helped support this effort the most was building models within platforms where everyone on the team was already fluent. For us, this was primarily Miro, Google Docs, and Sheets.

Screenshot of two representations of the survey, carried across platforms (Miro and Sheets)

First impressions matter, and we didn’t want to put out anything that felt like a work in progress. Even with this in mind, we made a few last-minute tweaks as we adapted our survey to maximize its appeal in other social media environments.

Arnold Wasserman’s desk critique was incredibly valuable for our team, as his feedback helped us consider the importance of our survey as a communication tool. He recommended that we make the implicit explicit, directly communicating to our participants what we expected and why. We were encouraged to explain what questions we were asking, and to share this openly. This kind of transparency can be tedious, especially in text-based systems. I took on this task and simplified statements throughout the entire experience.

This gave the survey a personality all its own: like a casual and curious friend, it asked about specifics but applied little pressure. We kept things open.

Open-ended data cannot be calculated; it must be evaluated for patterns. Next week will be a scramble to synthesize patterns and new insights as we work to finalize system concepts into well-defined parameters. We hope that through this process we will also identify opportunities to produce relevant and compelling artifacts (our final output/deliverable).

It still feels like a risk to be so far into a process and to still not have a clear idea of what it is we are making. We instead draw our assurances from what we have already made: an index of relevant articles, interview notes, countless diagrams and visual representations of high-level abstract concepts and maps at almost every level of visual fidelity imaginable, hundreds of presentation slides, dozens of pages of reflective text, and months’ worth of Slack messages, shared links, and drafted emails. We created interactive digital workshop spaces and protocols for our participants, and archives with 256-bit encryption.

When looking at the collective volume of effort from this team, it’s difficult to imagine that we wouldn’t make something meaningful in the end. Is that too optimistic? Ask me in a month.

Week 10 Update: Speed Dating and Concept Evaluation

We had a somewhat irregular week for Studio II. After our presentation, our team regrouped and strategized on how we might conduct the next phase of our research. We started out with just two concepts (an ARC educator “hackathon” and a community-promoting “ARC awards” program), and while our team felt confident that these concepts were feasible and desirable for addressing our problem space, we still had a lot of open-ended questions that would require further inquiry. Additionally, we became very concerned about the potential opportunity costs of not exploring more alternatives.

To address this concern, we decided to return to our primary research and synthesize niche problem statements that might provoke additional concepts. This went extremely well, and we now have more than a dozen concepts ready for evaluation. We’re excited to get these ideas in front of educators, but reaching them remains a substantial obstacle in our process.

We consulted with Hajira and Sofia about our concerns and asked how we might convert the highly synchronous activity of “speed dating” into a more asynchronous online form. They recommended Typeform, and while this option was appealing, it came with a few drawbacks. The ultimate dealbreaker was the price: it costs $40 to enable the features that actually make the platform more useful than free products such as Google Forms. After some digging, I found a free alternative (they literally marketed themselves as such). Tripetto offers logic and branching that will enable our team to structure paths through our survey and tailor each participant’s experience. This is pretty huge, considering the scarcity factors our team has struggled with since the beginning of this project.
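Branching logic like this is easiest to picture as a small graph: each question maps an answer to the next question to show. The sketch below is a hypothetical Python illustration of that idea; the question IDs, wording, and the `walk` helper are invented for this example and are not Tripetto’s API or our actual survey.

```python
from dataclasses import dataclass, field


@dataclass
class Question:
    qid: str
    prompt: str
    # Maps an answer to the id of the next question; "*" is the default branch.
    # An empty dict means the survey ends after this question.
    branches: dict = field(default_factory=dict)


def walk(survey, start, answers):
    """Follow branches from `start` using `answers` (qid -> answer).

    Returns the list of question ids a participant would see, in order.
    """
    path = []
    qid = start
    while qid is not None:
        path.append(qid)
        q = survey[qid]
        answer = answers.get(qid)
        # Use the branch for this answer, falling back to the default branch.
        qid = q.branches.get(answer, q.branches.get("*"))
    return path


# Hypothetical survey: educators and administrators see different follow-ups.
survey = {
    "role": Question("role", "What is your role?",
                     {"educator": "classroom", "administrator": "policy", "*": "end"}),
    "classroom": Question("classroom", "Which concept would help your classroom most?",
                          {"*": "end"}),
    "policy": Question("policy", "Which concept could your district support?",
                       {"*": "end"}),
    "end": Question("end", "Thanks! Anything else you would like to share?"),
}

print(walk(survey, "role", {"role": "educator"}))
```

Each participant only sees the questions relevant to their answers, which is the kind of tailoring that free form builders without branching cannot offer.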

Despite this progress and excitement for next steps, I’ve personally struggled with motivation this week. I know that a lack of regular sleep and some external stressors are partially to blame, but there are many factors contributing to this. It’s been difficult to process (cognitively and emotionally) what comes next for me.

This week, I received my cap and gown, a diploma frame, and a few other artifacts to commemorate my time at CMU. I’ve been in school since January of 2014, and I feel incredibly lucky and grateful for this opportunity. To date, academics has been my longest career. I have spent more time being a student than my entire Navy enlistment, or my time working at Intel as an engineer. Each chapter came with its own struggles, failures, and successes.

Each made an indelible mark on my psyche and personality. I could never imagine in my wildest dreams that my educational path would end here, in Pittsburgh, confined to my shoebox apartment, a deadly virus burning down countless lives while I indulge in high-level theories. I owe so much for this good fortune, and I do not know how I will ever repay the world for what it has given me.

It’s not so much that I am procrastinating — I put in a lot of hours this week, especially for this project — it’s that I’m paralyzed, afraid that what we are doing is missing something vitally important yet still unnamed. I also know that 15 weeks is hardly enough time to understand potential futures and their relationships to the current state.

It’s all crushing me down. I feel the weight of an obligation to deliver good work, yet I am terribly uncertain about this process. I’ve never done such intensive research before, and while I believe the theories and frameworks I’m soaking in (Worldview filters; Voros cone; Archplot structures; CLA; Empathy mapping; Participatory, Generative, Co-design, etc.) are helpful and necessary to our work, it’s difficult to know if the way our team applies these unfamiliar methods will yield truly impactful results.

I know that this is a learning experience; first and foremost, it is an invitation to fail brilliantly as we discover new ways of making. But without any prior experience with this stage, it’s so difficult to keep my chin up and to believe in my own creativity and ability.

Week 8 Update: Generative Research and Future Visions of Portland Public Schools

We began this week with a guest lecture from Adam Cowart, a PhD candidate in the transition design program. He introduced us to the concept of CLA (Causal Layered Analysis). We used this framework to better understand the landscape of our problem space at Portland Public Schools. Adam described different facets of the problem space through the lens of “litany filters.” To recognize what futures are feasible, we need to understand the triad of history, present, and future, and what elements in our landscape pull, push, or weigh down progress.

We took some time in class to reframe our insights through this framework, and began synthesis of potential elements to build a bridge toward the future vision created by Prospect Studio. This process began slowly, but after some heavy lifting we began filling out the diagram with great enthusiasm! It was refreshing to revisit our secondary research (which was already categorized under a STEEP-V framework). It was revealing to see visually how much further we have advanced our understanding of this problem space since literature review and background reading.

Outside of class, our team was busier than ever: working to adapt and overcome the obstacles we’ve encountered in our generative research phase has not been easy. I’ve struggled to support these efforts. The external factors of my personal and professional life have been an ongoing source of strain. I feel so much gratitude for the support and encouragement I’ve received from this team, and this week I felt a great deal of pressure to reciprocate.

Sample of generative research protocols

This effort to pay back the generosity I received (when I needed it most) began with a complete/comprehensive draft of our protocols for generative research, and the specifications for our workshop. Working with Carol, we delivered this to the team ahead of schedule. It was necessary for us to draft new protocols and workshop exercises to include a broader audience, outside of Portland Public Schools. We found that last week was somewhat of a dead end for seeking participation from our intended stakeholders (administrators and educators at PPS).

For our workshop, we wanted to know how different stakeholders perceive their relationships with counterparts, learn what different stakeholders prioritize and why, gain a deeper understanding of how educators think about the future of public education, and explore and define preferred futures.

We conducted three separate workshop sessions with educators outside of PPS. This included districts neighboring PPS (Gresham-Barlow), as well as out-of-state educators. This approach allowed us to glean insights into what is common across the US public school system and what is more specific to Portland. While this adaptation is not without the risk of skewed data, it is far preferable to having no insights beyond our primary research activities.

Screenshots of workshop activity

This was my first experience with executing participatory design with stakeholders and it has been such a rollercoaster of emotions. Since Carol and I worked on the protocol together, it was only logical that we also create the visual and interactive components for the workshop. We iterated on our initial concept by practicing with our own team, with each member taking a turn roleplaying as a participant. This helped us to work out the kinks and refine details before putting anything in the hands of our participants.

The first workshop with a real participant was very revealing. With access to their thought process in real time, we watched their visual associations, priorities, and ideas about the future peel back in layers, digging deeper into their lived experiences than we ever had through primary research and conversational interviews. Even the generation of simple sketches gave us glimpses into their inner worlds. I now question how important it was to conduct traditional interviews in the first place. Workshops are just so much more dynamic and active than interviews, and I consistently came away feeling more connected to the participants and their experiences.

This weekend was highly reflective. With new insights in hand, we spent over five hours evaluating what we discovered. There was so much for us to consider, and it was only once we had the chance to pick it all apart together as a team that we could begin to make sense of it all. Many of our initial assumptions were blown out of the water. Our newfound perspective gave us a real sense of how important relationships are in the field of teaching. We also learned that technology is probably the least important factor for educators: with the exception of a desire for students to have high-speed internet at home, there was little to no interest in improving access to technology generally.

I’m still getting used to applying so many different approaches and methods so quickly. I feel like I’m only occasionally operating with a sense of clarity. There has been prolonged fuzziness that’s difficult to describe or ignore. It seems as though new insights provoke deeper questioning, while offering little in the way of certainty. I think this is just the experience of progressively revealing collective and individual ignorance. Before learning enough to act decisively, we must first gaze into the vast abyss of what we still do not know.

Kinetic-friendly spoon project Mega Post

That’s a wrap! It’s certainly been an interesting semester, but now I am ready to put it behind me. Reflecting on the spoon project, I have some final thoughts and observations. First, I want to thank the fine folks at CMU School of Design. From the amazing and hardworking faculty to the graduate student cohort, I have received nothing less than inspiration and encouragement throughout this entire process, despite the obvious challenges of working remotely.

Rendering of sixth and final (?) spoon design. I pulled the kitchen design (Pierre Gilles) and bowl (Damogran Labs) from GrabCad.com. The spoon and coffee mug are mine.

This project was divided into two parts: the first part focused on exploring different ways of prototyping and making. This was described to me as an informal way of A/B Testing for methods. The second part involved the deliberate iteration of prototypes through user testing — a challenge in the context of a global pandemic and social distancing. To make the most meaningful design choices possible given limited resources, I decided to leverage the power of physical simulation to supplement the making of physical prototypes.

There are a variety of 3D software tools that offer some degree of physical simulation. For this project, I selected Maxon Cinema 4D R20 (Educational License) and Blender as my two ways of making. I chose these because I am already familiar with Cinema 4D and know how to manage a workflow in that context, because Blender is open source and free for anyone to use, and because both programs run on macOS and Windows (my rendering workstation is a Hackintosh with multiple operating systems, which grants the flexibility to overcome certain technical limitations). My initial experiments with Cinema 4D were… not great.

As you can see, there are “physics” happening here, but they are not anything close to the physics of the real world. This is not “real world” physics, this is Asshole Physics:

Zachary "Spokker Jones" Gutierrez and I came up with the term "Asshole Physics" when we were discussing the game and the physics models it employed. Basically there's a lot of crap you can knock over and kick around, including dead bodies, buckets, cans, and little sections of drywall which are standing around in the middle of rooms for no obvious reason. Zachary casually mentioned, "I have made it a point to knock over every fucking thing in that game. I am living out my fantasies of being a giant asshole," and I responded by stealing his "asshole" comment and claiming that I made it up. Thus "Asshole Physics" was born.

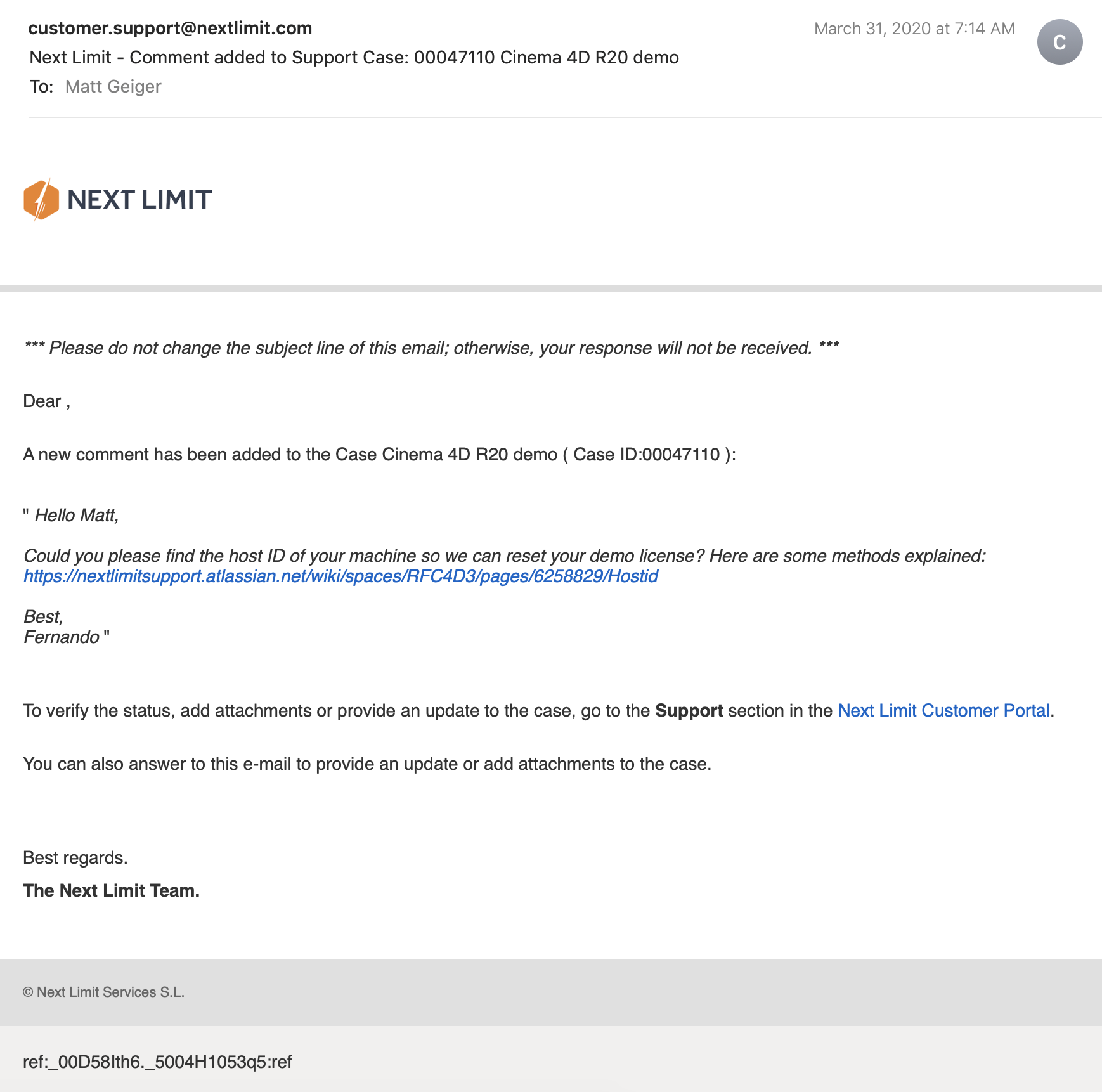

Without more sophisticated plugins to simulate fluid, Cinema 4D R20 is only capable “out of the box” of simulating non-Newtonian semisolids. I can make stuff bump around and “squish.” I can have a 3D character micturating on the side of a building. I can create the appearance and illusion of something like a fluid, but with such restrictions, I could not realistically evaluate my spoon designs. I explored my options and found that Next Limit’s RealFlow plugin would meet my basic needs. Best of all, they offer a free 30-day trial! My initial excitement quickly waned after the plugin failed to install and activate on my system…

(This email chain is long and covers a week of back and forth with customer service. I am including the entire conversation as a way to recreate my experience. While this may not directly relate to the scope of this project, I still believe that there is value in documenting the unexpected problems that crop up when trying to do something new.)

It took a week to finally get everything sorted with the demo. During that time, I began to explore option B: Blender.

Blender is a free, powerful, open source 3D creation tool. Best of all, it includes the mantaflow fluid simulation engine (since version 2.82). I have worked with Cinema 4D on other projects and have become fairly comfortable with its interface. Even with my experience in Fusion 360, Inventor, and C4D, I knew that I would need to overcome a learning curve before I could use Blender to meet my needs for this project. Fortunately, I was able to find a spectacular tutorial series for beginners.

If you want to read more about my experience with the tutorial, click here.

This tutorial was ideal because it involved exercises that helped me learn how to use the interface, and covered several different workflows. I was really impressed with Blender’s node-based material system and procedural textures. You can work strictly with parametric modeling, or you can discretely modify mesh geometry to create highly organic and imperfect forms. I look forward to working with Blender on future projects. It’s a very exciting time to be working in 3D.

While working through these tutorials, I began sketching and working in Fusion 360 to craft my first spoon designs for part 2 of this project. You can read more about this experience here.

Takeaways from Part 1

I really appreciated the responsiveness from the team at Next Limit. Clearly there are problems with how their product implements copy protection. This is an all-too-common problem in the world of software. Programmers gotta eat just like everybody else, and we certainly should make sure that the talented and hardworking folks behind the code are able to put food on their table at the end of the day. Piracy can deprive a small business of the revenue it needs to keep the lights on, so I am absolutely sympathetic to this reality and to the risks involved in releasing software for demo purposes. Getting people to pay for something that they can easily get for free is a challenging proposition. At the same time, you cannot realistically expect customers to pay for software if they cannot try it first. Ultimately, this one week of back and forth with customer support was a critical loss: I never completed a side-by-side comparison of fluid simulations. I did eventually succeed at installing and using RealFlow for fluid simulations (and was honestly impressed with how easy it was), but I did not have enough time to set up a comparable simulation to evaluate spoon designs. My trial expired about a week ago, and I see this aspect of the project as a lost opportunity. If Next Limit adopted licensing practices similar to Maxon’s (verification through an .edu email address), they could offer an educational package of their RealFlow plugin.

Blender really came through for me. The learning curve was aggressive, but not impossible. While I found mantaflow to be a respectable and entirely capable fluid simulator, it was not without its own share of issues. I spent a lot of time making granular tweaks to improve the fidelity of my simulations, while also using the observations from my simulations to inform design decisions for my spoons in part 2 of this project.

Part 2: Design Iterations Based on User Testing

While this project required user testing and design iterations based on feedback, I decided to limit the user evaluations to address handle shape and the spoon’s overall dimensions. This was not an arbitrary decision or an excuse to focus on physical simulation of fluid dynamics (with user testing as an aside). No, this decision was based on the nature of the course from which it was assigned: Prototyping for Interaction Design. This semester I have been focusing on designing for interaction (arguably, all designers focus on this aspect at some point in their process). When thinking about the tools we use (to eat food) as a system, it is important to consider the touchpoints involved. The handle of a spoon is a non-trivial component. It can take on many forms, and naturally includes affordances. How someone holds a spoon, and how easy it is for them to use it, are central to the evaluation of the design.

The design iterations were highly generative in nature, inspired by both user evaluations and physical simulations. Throughout, I maintained a homeomorphic continuity, treating the initial shape as an elastic form to be molded and reshaped to maximize performance. I wanted to create something useful, and the physical simulation of fluids provided a means of evaluation; still, knowing how a concave shape might be optimized to perform under rapid movement is only one aspect of a more complicated interaction, and this test alone could not fully address human needs. When physical form is designed and directed to improve user interaction (and physical properties are given equal consideration), it is possible to create a truly useful tool. I realize that this is a very technical description, but it is easier to understand when properly visualized. I have rendered a compilation sequence to show how this spoon shape evolved to its final(?) form (I am still considering a physical prototyping stage for this project over the summer).

A sequence of fluid dynamics tests designed to evaluate fluid retention of concave forms. Carnegie Mellon University, School of Design, Prototyping for Interaction, Spring 2020.

Toward the latter half of this sequence, you will notice a change in colors (for both the liquids and spoons). I decided to differentiate the final rendering sequences because these were based on user evaluations. The colors chosen for these final sequences are based on the color tags used for the user test:

These printouts are derived from DXF vector images exported from Fusion 360. The designs shown are oldest (top) to newest (bottom). The fifth design (blue) is rendered with a blue body and green liquid.

I printed and mailed the paper prototype to a potential user suffering from ongoing hand tremors (my partner’s mother). I sent this without written instructions. Instead, I only provided different color tags to facilitate feedback. My user let me know that the red spoon handle was in the “Goldilocks” zone in terms of size and shape: not too big, not too small, not too curvy, not too straight. Using this feedback, I constructed the sixth and final (?) form (see the first image of this post).

The user test included a direct side-by-side comparison with existing dinnerware.

Before developing these simplified paper prototypes, I also experimented with ways of making more three-dimensional forms that could be sent in the mail. While this novel approach showed some potential, I was concerned with how user error might complicate or (even worse) bias feedback. Still, these paper prototypes helped me to better understand and interpret the scale of my 3D models.

Final Thoughts

This project still feels somewhat incomplete. Perhaps this is because the generative design process itself can always demand further iteration, or maybe it is because I have not yet created a physical prototype that can actually be tested as an eating instrument. Maybe it is only because there were still a few “rogue droplets” (grrrrrr) that I simply could not keep contained, even by my sixth iteration. Whatever the net effect of these various shortcomings, I am pleased with the learning opportunities that were presented throughout this exploration of design.

Were I to continue with this process, the next steps would be to 3D print the latest shape using a food-safe material (there are a few third-party vendors that offer this service). I would then ship that latest design for further user evaluation. I believe that there are still many additional iterations necessary before I could defend having created something that satisfies the criteria I set out with this project (i.e., a spoon that overcomes the challenges of involuntary muscle movements and essential tremors).

If I were to collaborate with others, I would also want to evaluate the ecological and economic impact of such a device. How might we go about manufacturing at an appropriate scale? How might additional user tests with a wider audience influence the existing form? There remain many unanswered questions, and I have a newfound respect for the power of generative design.

Bugs in the Blender

I have continued to have success exploring fluid simulations in Blender, but this process has not been without its quirks. I recently encountered a strange issue related to the Particle Radius setting:

Particle Radius

The radius of one liquid particle in grid cell units. This value describes how much area is covered by a particle and thus determines how much area around it can be considered as liquid. A greater radius will let particles cover more area. This will result in more grid cells being tagged as liquid instead of just being empty.

Whenever the simulation appears to leak or gain volume in an undesired, non physically accurate way it is a good idea to adjust this value. That is, when liquid seems to disappear this value needs to be increased. The inverse applies when too much liquid is being produced.
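To make this description concrete, here is a toy Python sketch of how a larger particle radius tags more grid cells as liquid. It assumes a 2D grid with cell centers at half-integer coordinates and a radius measured in cell units; the `tag_liquid_cells` helper is hypothetical and is not mantaflow’s actual algorithm.

```python
import math


def tag_liquid_cells(particles, radius, width, height):
    """Return the set of (x, y) cells whose center lies within `radius`
    (in grid-cell units) of at least one particle.

    Toy 2D model only: cell (x, y) has its center at (x + 0.5, y + 0.5).
    """
    liquid = set()
    reach = math.ceil(radius)  # how many cells out we need to check
    for px, py in particles:
        cx, cy = int(px), int(py)  # cell containing the particle
        for x in range(cx - reach, cx + reach + 1):
            for y in range(cy - reach, cy + reach + 1):
                if 0 <= x < width and 0 <= y < height:
                    # Distance from the particle to the center of cell (x, y).
                    if math.hypot(x + 0.5 - px, y + 0.5 - py) <= radius:
                        liquid.add((x, y))
    return liquid


# One particle in the middle of an 11 x 11 grid, at three different radii.
for radius in (0.5, 1.0, 1.5):
    cells = tag_liquid_cells([(5.5, 5.5)], radius, 11, 11)
    print(radius, len(cells))  # 1, 5, then 9 cells tagged as liquid
```

The same particle accounts for one, five, and then nine cells of “liquid” as the radius grows, which is why a radius that is too large can make a simulation appear to gain volume, and one that is too small can make it appear to leak.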

What does this look like in practice? My most recent simulation actually seems to produce fluid as the scene progresses.

Nevertheless, I was able to gain critical insights into this form and will continue to iterate new designs. This is being done in conjunction with paper prototyping. These forms are less sophisticated, but still provide valuable information about how users will experience and interact with this flatware.

Spoonfuls of updates

This week was packed full of progress on multiple projects. I received feedback for my group’s birth control information app “MyGallery.” Our work was even featured on CMU’s Design page.

Crafting an iconographic representation for the withdrawal method was my proudest moment.

I’ve continued to explore fluid simulations with Blender, and I’ve run into some technical hurdles. Blender 2.82 supports a variety of GPU technologies for rendering and computation: it offers an AI-driven denoiser (OptiX), CUDA path tracing, and OpenCL. My MacBook Pro has an AMD Radeon Pro 5500M GPU, as well as the option to plug in a Radeon Vega Frontier Edition (first-generation Vega) eGPU over Thunderbolt 3. There is plenty of GPU compute power in either configuration, but there is a snag: macOS 10.15 (Catalina) has deprecated OpenCL in favor of Metal. CUDA and OptiX are proprietary to nVidia GPUs, and Apple hasn’t shipped a Mac with nVidia GPUs since the Kepler generation (GeForce 700 series). Blender supports AMD ProRender, but I found it terribly unstable.

I could easily slip into a tangent about how unfortunate the breakup between Apple and nVidia truly is, but I will spare you.

My current workflow involves queuing some tasks on my desktop, which runs Windows 10 with dual Radeon VII GPUs. Unfortunately, I found that rendering in Blender is unstable when both GPUs render in parallel. No problem, since I can free up the other GPU for Folding@Home (a hobby of mine that has exploded in response to COVID-19). Who would have guessed that a global pandemic would boost a distributed computing project to exascale?

Despite these obstacles of platform compatibility, I have made significant progress on my simulation-based research. It is difficult to overstate how exciting this project has been for me. For some context: the ASCI Red supercomputer (at the Sandia National Laboratories) was built in 1996, and was the fastest supercomputer in the world until 2000. It was the first computer to achieve true terascale computing (one trillion floating point operations per second). I built my first terascale computer in 2013. This was shortly after leaving my job at Intel. There was something very gratifying about building a computer with a CPU I helped manufacture. GLaDOS G4 (you can see the project here, scroll down to “Everything Else”) was built with a GeForce GTX 780 GPU and an Intel Core i7 4770K overclocked to 4.5 GHz. It ran nearly silent and fit inside an upcycled Apple Power Mac G4 (microATX equivalent) case.
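For a sense of what “terascale” meant for that 2013 build, theoretical peak throughput can be estimated as shader cores × clock × floating point operations per core per cycle. This is a rough sketch that assumes NVIDIA’s published GTX 780 figures (2304 CUDA cores, 863 MHz base clock) and one fused multiply-add (2 FLOPs) per core per cycle; the `peak_flops` helper is illustrative only.

```python
TERAFLOP = 1e12  # one trillion floating point operations per second


def peak_flops(shader_cores: int, clock_hz: float, flops_per_cycle: int = 2) -> float:
    """Theoretical peak FLOPS: cores x clock x FLOPs per core per cycle.

    flops_per_cycle=2 assumes one fused multiply-add (FMA) per core per cycle.
    """
    return shader_cores * clock_hz * flops_per_cycle


# Assumed GeForce GTX 780 figures: 2304 CUDA cores at an 863 MHz base clock.
gtx_780 = peak_flops(2304, 863e6)
print(f"GTX 780 theoretical peak: {gtx_780 / TERAFLOP:.2f} TFLOPS")  # 3.98 TFLOPS
```

This is a single-precision theoretical peak, not a measured result. ASCI Red’s record was measured on the LINPACK benchmark in double precision, and consumer GPUs of that era delivered far lower double-precision throughput, so the two numbers are not directly comparable.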

The ASCI Red supercomputer was designed to simulate nuclear weapons tests. Today, I am using a system roughly ten times more powerful to simulate soup spilling out of a spoon. I was inspired to approach this problem by two projects. The first was a 2013 project from Portland State University (my alma mater) to make a coffee cup for zero-gravity environments. They used drop cages and 3D printing to iterate several designs until they had a shape that held liquid. “It wasn’t needed, but it was requested.”

The other project hit me right in the heart.

The S’up Spoon is the embodiment of good design. The design was inspired by deep empathy for a user’s problem, and the solution involves as little design as possible. There are few technologies in this world that we trust enough to put in our mouths. If you can make it in this space, you can make it (almost) anywhere. During the fall semester, Moira and I visited the Carnegie Museum of Art. They had an exhibition on accessibility design, and I was brought to tears by stories of innovation and vibrant improvements to quality of life for people with disabilities. Technology, at its very best, empowers people to realize their fullest potential. We can easily get lost in the exhilaration of the complex, but this impulse must not dampen our ability to appreciate the elegance of simplicity. Some problems are best solved by form. I saw many incredible solutions in that exhibition, but this spoon has really stuck with me.

My goal is not to make something better, but perhaps a little bit different. The shape of the S’up spoon is intuitive, and if we had never seen a spoon before, we might conclude that it is the better design over more traditional forms. It is, however, a strange thing to behold in our current cultural context. It looks more like a wizard’s pipe or a warrior’s horn. It is beautiful and ergonomic. I do not intend to improve on those qualities. Instead, my goal is to make a spoon that is inconspicuous while still achieving similar results for users who suffer from motor movement difficulties.

How has my first design fared under simulation?

While I can certainly see the appeal of a long hollow channel, I’ve become increasingly concerned that this shape may be difficult to keep clean. I can imagine objects getting wedged toward the back, depending on what is being consumed. I have begun to work on a second iteration with a shallower channel. Still, this first iteration does fairly well: it manages to retain most of the 15 ml (about 1 tablespoon) of fluid under rapid movement.

I enjoyed this simulation so much that I decided to make a rendering:

I have not yet gotten back into Cinema 4D to evaluate RealFlow. Despite the compatibility challenges, I am truly impressed with how powerful Blender, an open source tool, has become with this latest release.

Now that I have established this workflow, I can easily switch out revised designs to test under identical conditions. I’m still not sold on the current handle shape, and I think I can improve liquid retention by tweaking the angle of the lips. The flat-bottomed (Chinese-style) spoon does fairly well, with its obtuse-angled walls. Next, I will try a concave structure with a wider base for the handle and a more aggressive descending angle.

Prototyping – Part 2

Working with Blender has continued to go well.

I have also been looking at some of the existing solutions in this space:

KFS Easy Eat

http://www.eating-help.com

Liftware, by Verily

https://www.liftware.com

EliSpoon

https://elispoon.com

Ornamin - Supportive Cutlery (Parkinson’s)

https://www.ornamin.co.uk/shop/cutlery-set?number=SW24

S’up Spoon

https://www.youtube.com/watch?v=C8nNlWw6KbA

Apex Medicine Spoon

https://www.riteaid.com/shop/apex-medicine-spoon-0233706

I have been sketching and studying these forms in consideration for my own designs.

Some shapes are unappealing because of their associations. These still deserve consideration, as they function well in this space.

This week I will begin iterating designs in Fusion 360. Hopefully, I will finally be able to make use of my RealFlow trial license. I’m curious to see how the “out of the box” settings function with these geometries.

Fluid Simulation in Blender

“Throw off your fears, let your heart beat freely at the sign that a new time is born.” — Minnie Riperton

I’ve completed my workflow design for fluid dynamics testing in Blender. Here’s a proof of concept:

Now I just need to figure out RealFlow in C4D…

Dead Star Escape Playtests at XOXOFest 2018

XOXOFest gave me an opportunity to conduct some playtests for DEAD STAR ESCAPE! It’s a four-player tabletop game in which space pirates try to escape from a black hole! I got some excellent feedback on this project and still have a lot of work ahead of me, but it was really exciting to see a game played through from start to finish with new players. The play mechanics and rules are ironed out, but I still need to update the prototype and address some layout and other miscellaneous concerns.